AI Music Is Good But Not Great. Can We Fix That?

I generated 230 AI songs across 10 genres, built a model to rate them, and used the data to 4x the hit rate for good tracks. All on a MacBook.

AI music generators can now produce full songs—vocals, instruments, structure—in about two minutes on a laptop. I’ve been playing with ACE-Step 1.5 and the output is genuinely impressive. But there’s a problem: most of it lands in this zone of competent mediocrity. It sounds like music. It has all the right parts. But it doesn’t move you.

I generated 230 songs across 10 genres and 6 languages. Maybe 10% were songs I’d actually want to listen to again. The rest were technically fine but emotionally flat—like elevator music that went to music school.

So I built a system to fix the hit rate.

The Idea: Score Everything, Keep the Best

What if you had a model that could rate songs automatically? Generate a batch, score them all in seconds, keep the top scorers. A learned quality filter for music.
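The core idea is a best-of-N filter: score every candidate once and keep the top k. Here is a minimal sketch. The scoring function is passed in as a plain callable. The file names and scores in the usage line are made up for illustration.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")

def keep_best(candidates: Sequence[T], score: Callable[[T], float], k: int) -> list[tuple[T, float]]:
    """Score every candidate once and return the k highest-scoring ones, best first."""
    scored = [(c, score(c)) for c in candidates]
    # Highest score first; Python's sort stays stable with reverse=True, so ties keep input order.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical scores standing in for a real scorer.
fake_scores = {"song_a.wav": 3.1, "song_b.wav": 4.2, "song_c.wav": 2.7, "song_d.wav": 3.9}
print(keep_best(list(fake_scores), lambda path: fake_scores[path], k=2))
# [('song_b.wav', 4.2), ('song_d.wav', 3.9)]
```

Generation is the expensive step and scoring is cheap. That asymmetry makes it reasonable to over-generate and throw most of the batch away.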

How It Works

The architecture is deliberately simple. Two components:

MERT is a 330M-parameter model pretrained on 160,000 hours of music. It compresses any song into a 1024-dimensional embedding that captures rhythm, harmony, timbre, and structure. I freeze it and use it purely as a feature extractor.

The MLP scorer is four linear layers on top of that embedding, trained in 30 seconds on 8,000 songs from the Free Music Archive (FMA).

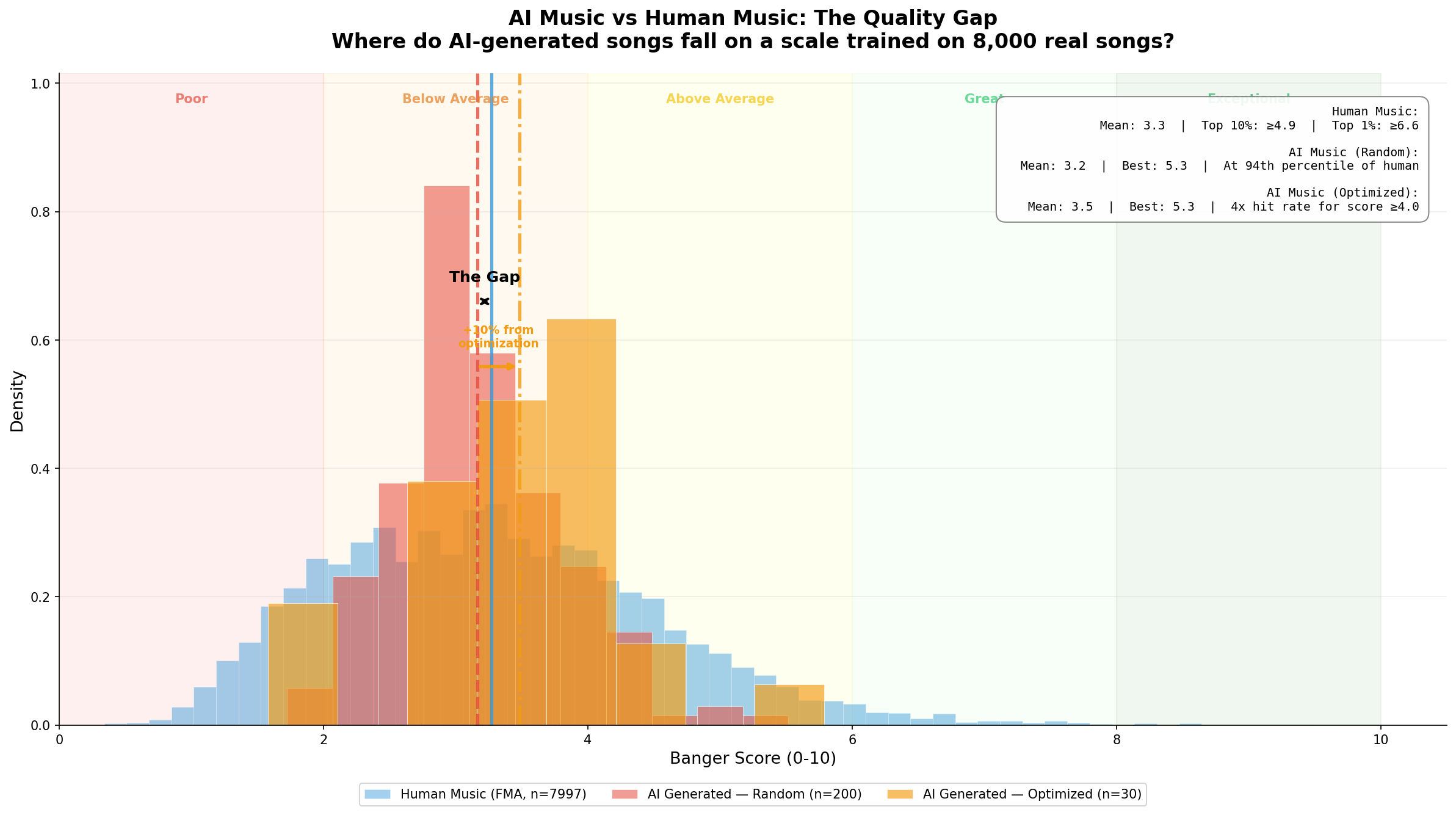
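The two-stage design can be sketched in a few lines. This is a minimal illustration, and it rests on several assumptions:

- MERT emits one 1024-dimensional vector per audio frame, and these are mean-pooled over time into one song vector.
- The hidden widths of 512, 256 and 64 and the ReLU activations are hypothetical choices.
- The random weights and random "frames" are placeholders, so the printed score is meaningless until the MLP is trained.

Only the MLP weights would ever be trained; the frozen MERT features are computed once per song.

```python
import numpy as np

EMBED_DIM = 1024          # size of one MERT embedding vector
HIDDEN = [512, 256, 64]   # hypothetical widths for the three hidden layers

def pool_frames(frame_embeddings: np.ndarray) -> np.ndarray:
    """Average (num_frames, 1024) frame features into a single 1024-d song vector (assumed pooling)."""
    return frame_embeddings.mean(axis=0)

def init_mlp(rng: np.random.Generator) -> list[tuple[np.ndarray, np.ndarray]]:
    """Four linear layers, 1024 -> 512 -> 256 -> 64 -> 1, with random (untrained) He-style init."""
    sizes = [EMBED_DIM, *HIDDEN, 1]
    return [
        (rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(sizes[:-1], sizes[1:])
    ]

def score(song_vector: np.ndarray, layers: list[tuple[np.ndarray, np.ndarray]]) -> float:
    """Forward pass: ReLU after each hidden layer, linear output for the final score."""
    h = song_vector
    for i, (w, b) in enumerate(layers):
        h = h @ w + b
        if i < len(layers) - 1:
            h = np.maximum(h, 0.0)
    return float(h[0])

rng = np.random.default_rng(0)
frames = rng.normal(size=(750, EMBED_DIM))   # stand-in for the frozen extractor's output on one clip
layers = init_mlp(rng)
print(score(pool_frames(frames), layers))
```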

AI vs Human Music: The Quality Gap

Here’s what happens when you score AI-generated music with a model trained on real human music:

AI songs cluster at the FMA median of 3.2. Real music has a long tail of truly great songs reaching 8–10. The best AI track (5.29, melodic techno) sits at the 67th percentile. Above average, not exceptional.
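A percentile like "67th" compares one score against the reference distribution of FMA scores. Percentile rank has several common definitions, and the article doesn't say which one it uses. The minimal sketch below assumes "percentage of reference scores strictly below the value". The reference list is toy data, not the FMA scores.

```python
from bisect import bisect_left

def percentile_rank(value: float, reference: list[float]) -> float:
    """Percentage of reference scores strictly below `value` (one of several common definitions)."""
    ordered = sorted(reference)
    below = bisect_left(ordered, value)  # number of elements < value in the sorted list
    return 100.0 * below / len(ordered)

toy_reference = [1.5, 2.4, 3.0, 3.2, 3.3, 4.1, 5.8, 6.5, 8.2]  # illustrative, not real FMA data
print(percentile_rank(5.29, toy_reference))
```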


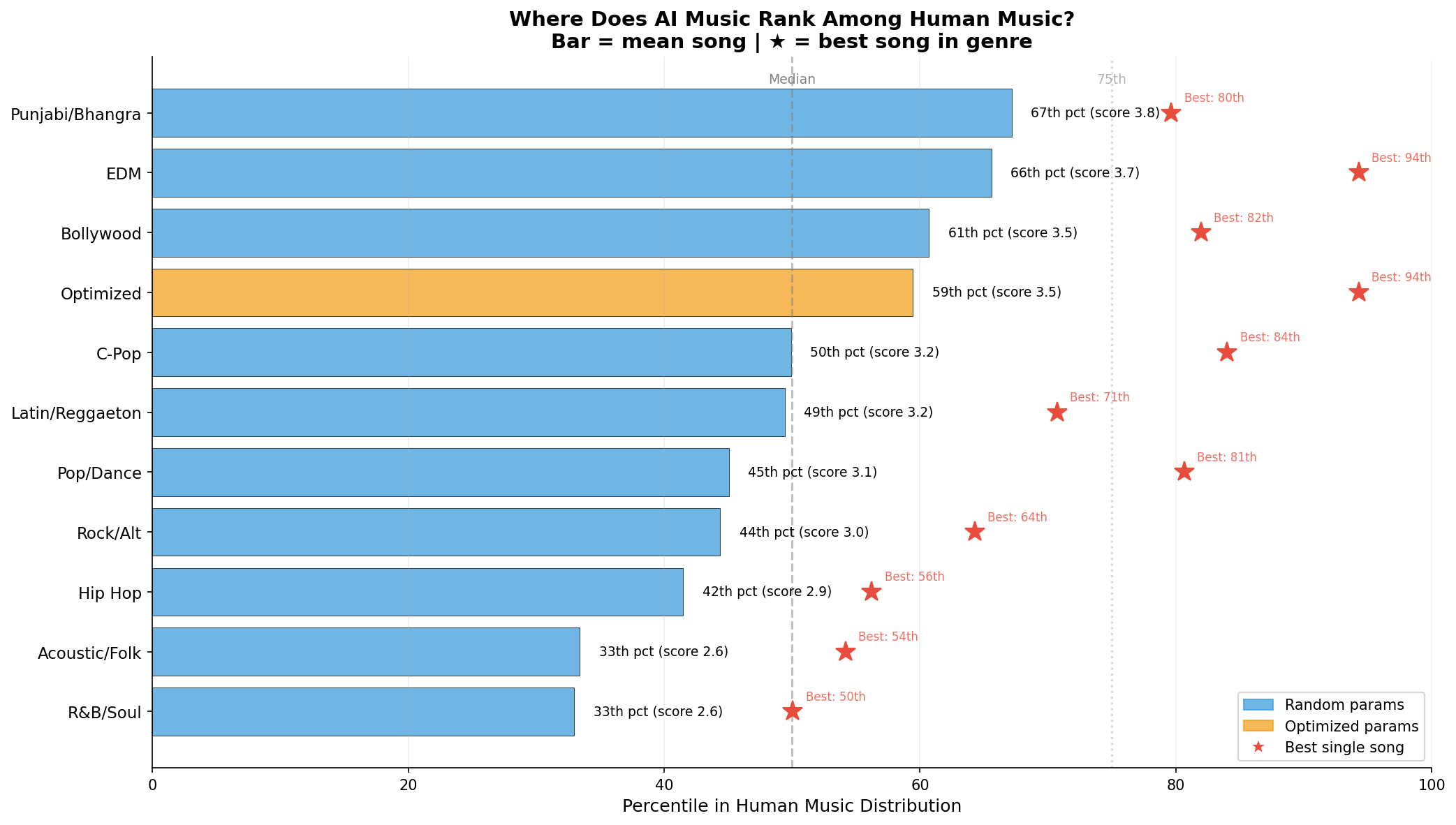

200 Songs, 10 Genres, 6 Languages

I tested across Hip Hop, Pop, R&B, Latin, Bollywood, Punjabi, C-Pop, Rock, EDM, and Folk. Twenty songs each, varied BPMs and keys.


EDM produced the highest single score (5.29). Punjabi/Bhangra had the highest mean (3.77), making it the most consistently good genre. R&B and Folk scored lowest; both lean on an emotional subtlety the generator doesn’t seem to deliver yet.

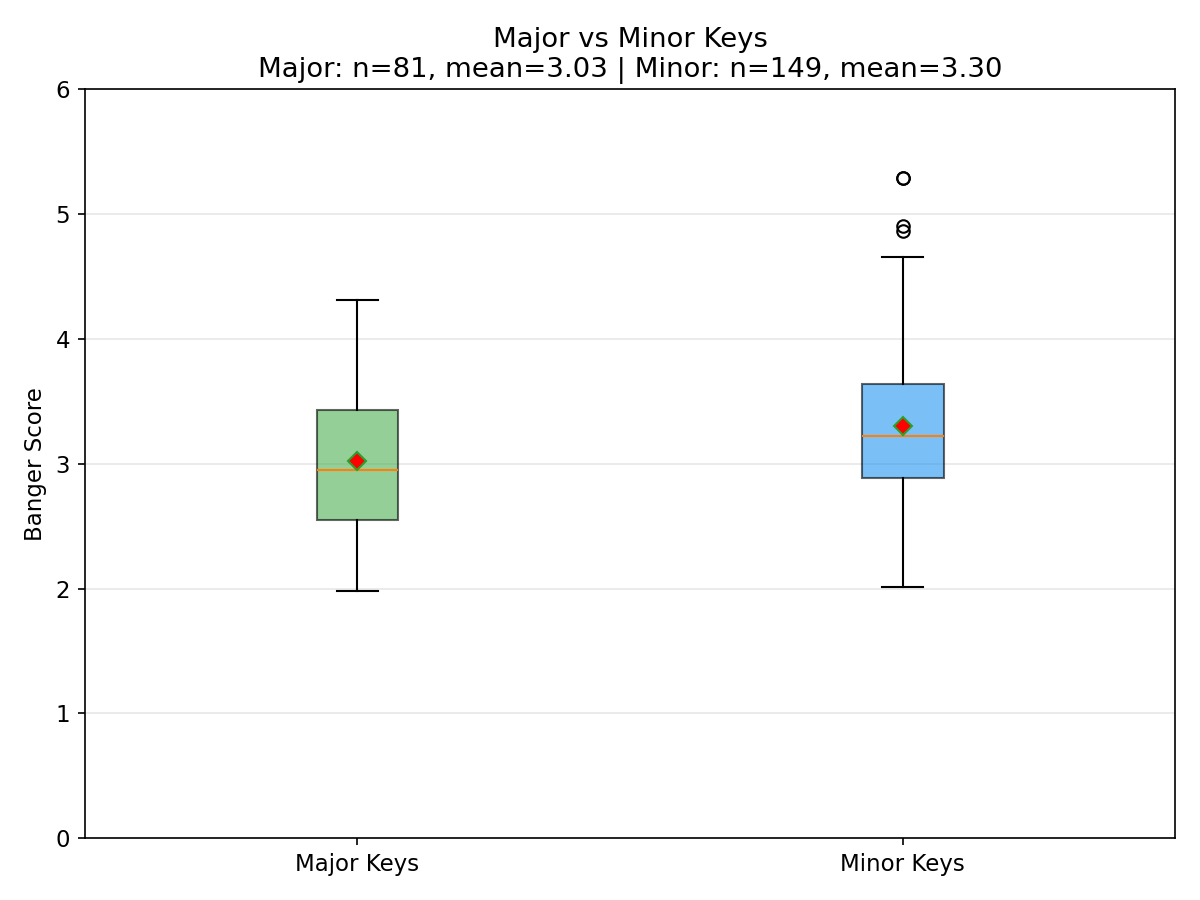

Minor keys beat major keys on mean score (3.26 vs 3.03), and all top-5 songs were in minor keys.

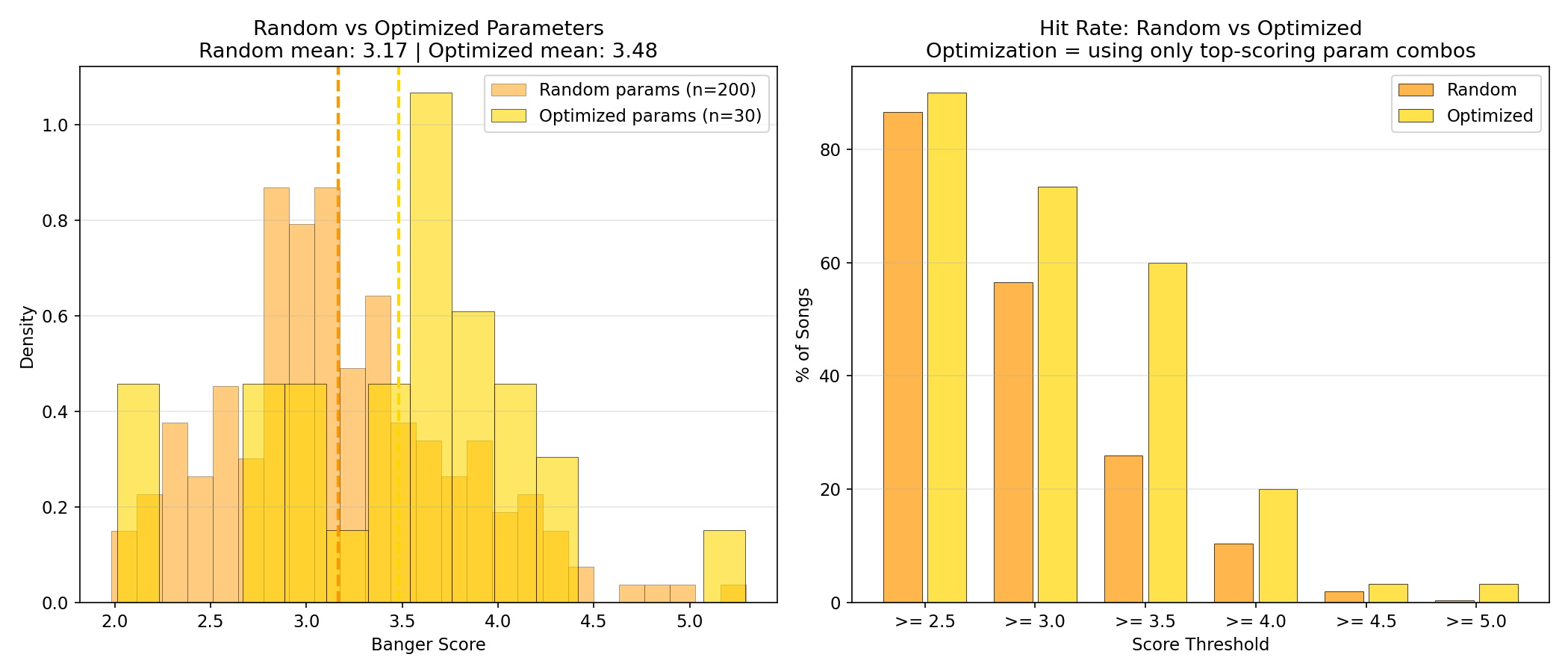
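"Highest single song" and "highest mean" are two different aggregations over the same per-song scores. That is why EDM and Punjabi/Bhangra can each come out on top. Below is a minimal sketch of both, assuming a flat list of (group, score) pairs. The numbers are invented for illustration and are not the article's data. Swapping the genre label for the key mode ("minor"/"major") gives the key comparison with the same function.

```python
from collections import defaultdict
from statistics import mean

def genre_summary(rows: list[tuple[str, float]]) -> dict[str, tuple[float, float]]:
    """Map each group to (mean score, max score)."""
    by_genre: dict[str, list[float]] = defaultdict(list)
    for genre, score in rows:
        by_genre[genre].append(score)
    return {g: (mean(s), max(s)) for g, s in by_genre.items()}

# Invented scores: one standout EDM track, but steadier Punjabi tracks.
rows = [("EDM", 5.3), ("EDM", 2.1), ("EDM", 2.6),
        ("Punjabi", 3.9), ("Punjabi", 3.7), ("Punjabi", 3.6)]
summary = genre_summary(rows)
best_single = max(summary, key=lambda g: summary[g][1])      # highest single song
most_consistent = max(summary, key=lambda g: summary[g][0])  # highest mean
print(best_single, most_consistent)  # EDM Punjabi
```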

The Optimization: 4x Hit Rate

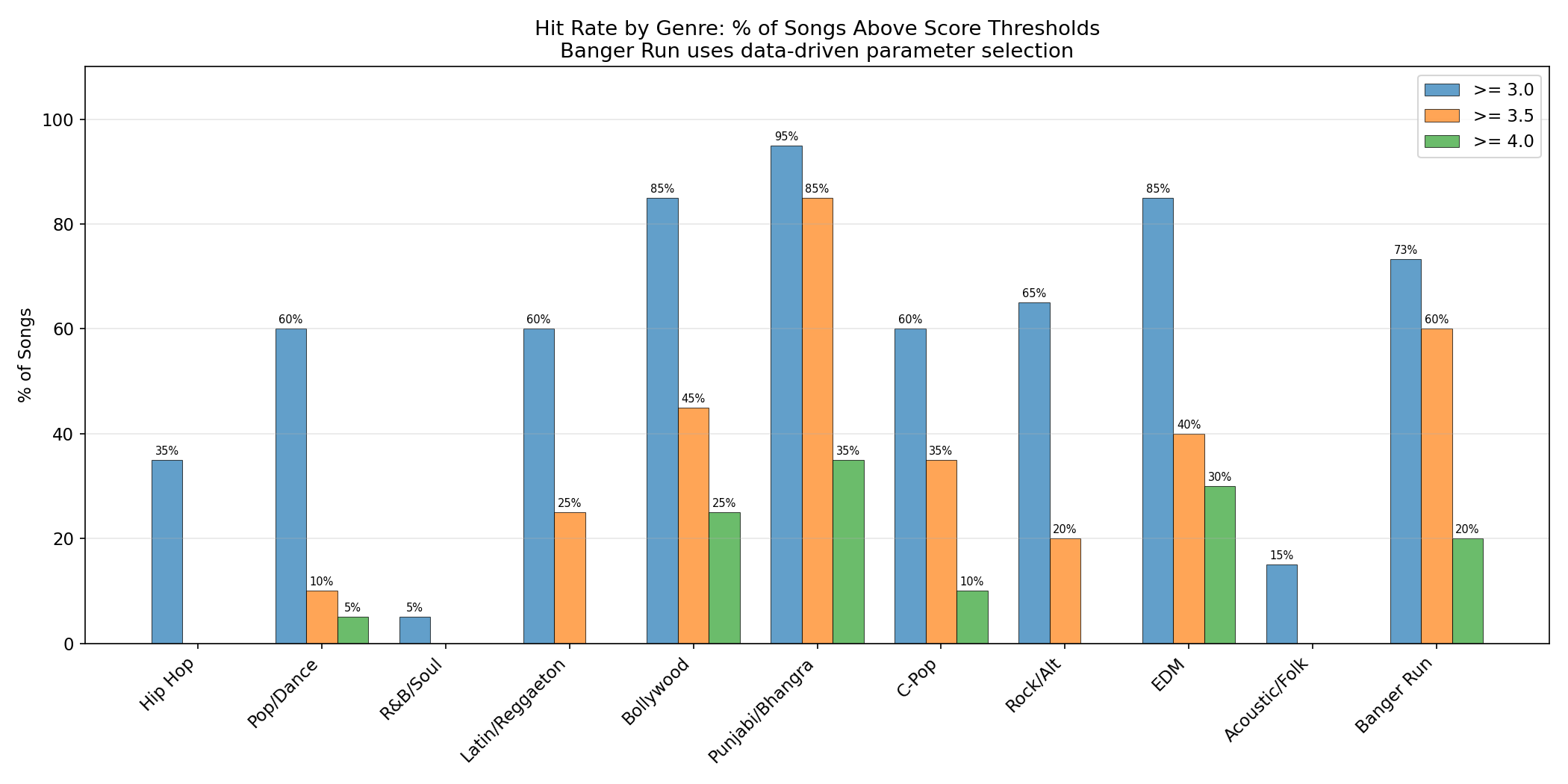

I analyzed which parameters scored highest, then generated 30 more songs using only the winning combinations. With random parameters, 20% of songs scored 3.5 or higher.
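This optimization step can be read as two small computations:

- Rank each parameter's values by the mean score of the songs they produced.
- Measure the hit rate as the share of songs at or above 3.5.

Here is a minimal sketch under those assumptions. The parameter names (`key_mode`, `bpm_band`) and the records are hypothetical. Picking the best value for each parameter independently is a simplification that ignores interactions between parameters.

```python
from collections import defaultdict
from statistics import mean

HIT_THRESHOLD = 3.5

def hit_rate(scores: list[float], threshold: float = HIT_THRESHOLD) -> float:
    """Fraction of songs scoring at or above the threshold."""
    return sum(s >= threshold for s in scores) / len(scores)

def winning_params(records: list[dict]) -> dict[str, object]:
    """For each parameter, pick the value whose songs have the highest mean score."""
    totals: dict[str, dict[object, list[float]]] = defaultdict(lambda: defaultdict(list))
    for rec in records:
        for name, value in rec["params"].items():
            totals[name][value].append(rec["score"])
    return {name: max(values, key=lambda v: mean(values[v])) for name, values in totals.items()}

# Hypothetical generation log: parameters used for each song and its score.
records = [
    {"params": {"key_mode": "minor", "bpm_band": "120-130"}, "score": 3.8},
    {"params": {"key_mode": "major", "bpm_band": "120-130"}, "score": 3.1},
    {"params": {"key_mode": "minor", "bpm_band": "90-100"}, "score": 3.4},
    {"params": {"key_mode": "major", "bpm_band": "90-100"}, "score": 2.6},
]
print(winning_params(records))                   # {'key_mode': 'minor', 'bpm_band': '120-130'}
print(hit_rate([r["score"] for r in records]))   # 0.25
```

Under these assumptions, a "4x" improvement means the optimized batch's `hit_rate` is four times the random batch's.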

Listen for Yourself

All 230 songs are playable on HuggingFace.

Try It Yourself

Upload any song and get a banger score, right from your browser via HuggingFace Spaces.

What This Means Beyond Music

The pattern generalizes to any generative AI pipeline:

- Build a cheap scorer using transfer learning.

- Generate a batch and score everything.

- Feed scores back into generation parameters.
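The three steps can be closed into a loop. Here is a minimal sketch. It assumes a hypothetical `generate(params)` that returns an output and a hypothetical `score(output)` that rates it. The feedback rule (resample new parameters near the current top-k) is a simple evolutionary-style choice made for illustration. It is not the article's method, which picked winning combinations once from the analysis.

```python
import random
from typing import Callable

def optimize(
    generate: Callable[[float], object],
    score: Callable[[object], float],
    init_params: list[float],
    rounds: int = 5,
    batch: int = 20,
    keep: int = 5,
    spread: float = 1.0,
    seed: int = 0,
) -> tuple[float, float]:
    """Generate a batch, score everything, then resample parameters around the best ones."""
    rng = random.Random(seed)
    pool = list(init_params)
    best = (float("-inf"), pool[0])  # (score, param) of the best output seen so far
    for _ in range(rounds):
        # Perturb parameters drawn from the current pool of winners.
        params = [rng.choice(pool) + rng.gauss(0.0, spread) for _ in range(batch)]
        scored = sorted(((score(generate(p)), p) for p in params), reverse=True)
        best = max(best, scored[0])
        pool = [p for _, p in scored[:keep]]  # feed the winners back into the next round
    return best[1], best[0]

# Toy stand-ins: the "output" is the parameter itself, and quality peaks at 7.0.
best_param, best_score = optimize(lambda p: p, lambda x: -abs(x - 7.0), init_params=[0.0], rounds=30)
print(round(best_param, 2), round(best_score, 3))
```

With a real generator, each `generate` call costs minutes, so `batch` and `rounds` are effectively the compute budget.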

Surprises

The scorer trained in 30 seconds. Transfer learning made the hard part trivial.

The genre bias was huge. Same generator, wildly different quality by genre.

Local hardware is enough. Everything ran on a MacBook Pro M4 Pro. No cloud GPUs.

What’s Next

AI music needs to get better. The scorer is a bridge: filtering helps now, but in the long term it could serve as a reward model for RL fine-tuning of the generator itself.

If you can score it, you can optimize it. And you can build a useful scorer with surprisingly little.